shu.b@northeastern.edu

Hi! I am Bangzhao Shu (舒邦照), currently a second-year Ph.D. student in Computer Science at the Khoury College of Computer Sciences at Northeastern University. I am a member of the CSG Lab and am advised by Professor Mai ElSherief. Before starting my Ph.D., I earned dual master’s degrees in Information and Environment & Sustainability from the University of Michigan, where I worked under the guidance of Professor David Jurgens at UMSI.

Broadly, my research lies at the intersection of human-centered NLP and LLM safety. My current research focuses on the following themes: (1) Emotional and Social Intelligence in LLMs; (2) Mechanistic Interpretability of LLMs; (3) Alignment and Safety of LLMs.

Publications

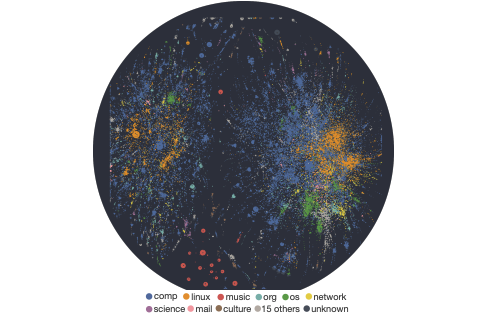

Causally Modeling the Linguistic and Social Factors that Predict Email Response

Yinuo Xu*, Hong Chen*, Sushrita Rakshit*, Aparna Ananthasubramaniam*, Omkar Yadav*, Mingqian Zheng*, Michael Jiang*, Lechen Zhang*, Bowen Yi*, Kenan Alkiek*, Abraham Israeli*, Bangzhao Shu*, Hua Shen*, Jiaxin Pei*, Haotian Zhang*, Miriam Schirmer*, David Jurgens

arXiv

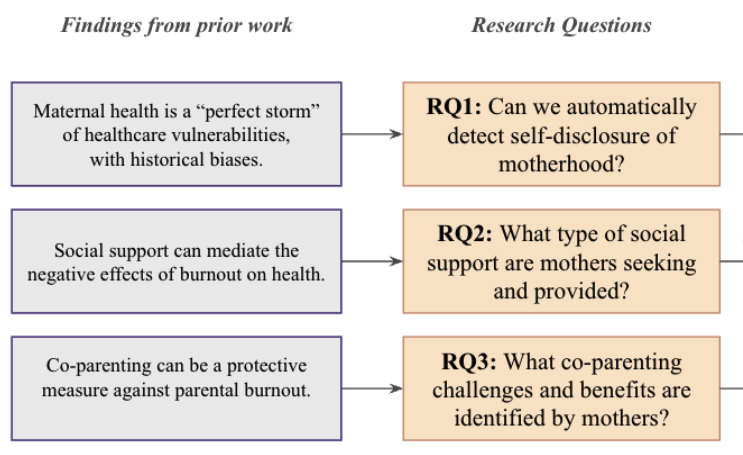

"He gets to be the fun parent": Understanding and Supporting Burnt-Out Mothers in Online Communities

Nazanin Sabri, Ananya Malik, Bangzhao Shu, Jason Snyder, Laurie Kramer, Mai ElSherief

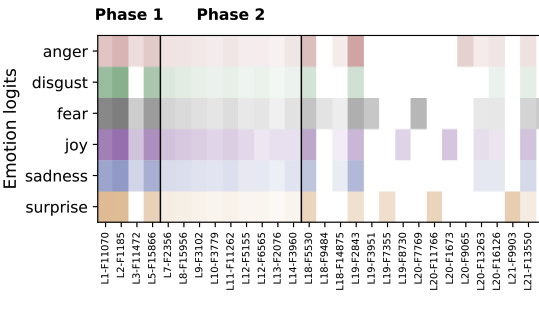

From Syntax to Emotion: A Mechanistic Analysis of Emotion Inference in LLMs

Bangzhao Shu, Arinjay Singh, Mai ElSherief

Education

Ph.D. in Computer Science, Northeastern University, 2024–2029 (expected)

M.S. in Information, University of Michigan, 2022–2024

M.S. in Environment & Sustainability, University of Michigan, 2021–2024

B.E. in Geomatics, Nanjing Normal University, 2016–2020

Teaching

Teaching Assistant: CS 6120 Natural Language Processing, Northeastern University, Winter 2025

News

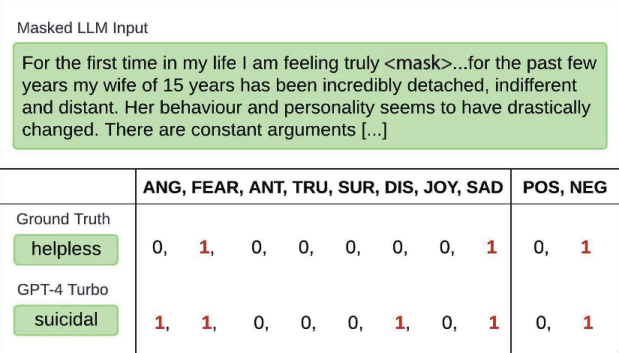

July 2025: Our new paper Fluent but Unfeeling: The Emotional Blind Spots of Language Models was accepted to ICWSM 2026! We present EXPRESS, a fine-grained emotion recognition benchmark dataset with 251 unique emotion labels. We systematically tested LLMs and found that it remains challenging for them to align with humans on emotional nuances.

May 2025: Our new paper Causally Modeling the Linguistic and Social Factors that Predict Email Response was accepted to NAACL 2025!

Sep 2024: Started my new journey at Northeastern University!

May 2024: I graduated with a Master of Science in Information and a Master of Science in Geospatial Data Science from the University of Michigan!

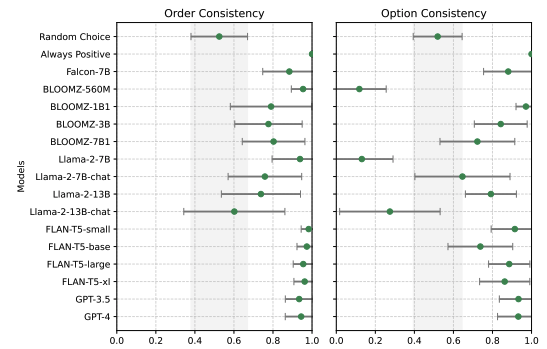

April 2024: Our paper You don’t need a personality test to know these models are unreliable: Assessing the Reliability of Large Language Models on Psychometric Instruments was accepted to NAACL 2024! In this work, we construct a dataset covering 39 psychometric instruments across 115 persona axes. We show that current question-style prompts are insufficient to capture model perceptions reliably: LLMs are highly sensitive to simple perturbations and exhibit low consistency under negation.